The future brings with it all sorts of exciting opportunities for improved efficiency; screens are getting bigger, microchips smaller, and everything is getting faster.

Keyword research is no different. The process for getting meaningful competitive intelligence on a digital vertical market has become more streamlined than ever, and pretty darn fast.

I’m going to take you through a process using Ahrefs Keywords Explorer to quickly pull out all the stops and:

- Gather a meaningful population of relevant keywords.

- Filter for modifiers.

- Quickly prioritize terms based on volume and competition.

- Infer and tag terms for intent.

- Use this data to design a short-term SEO content map.

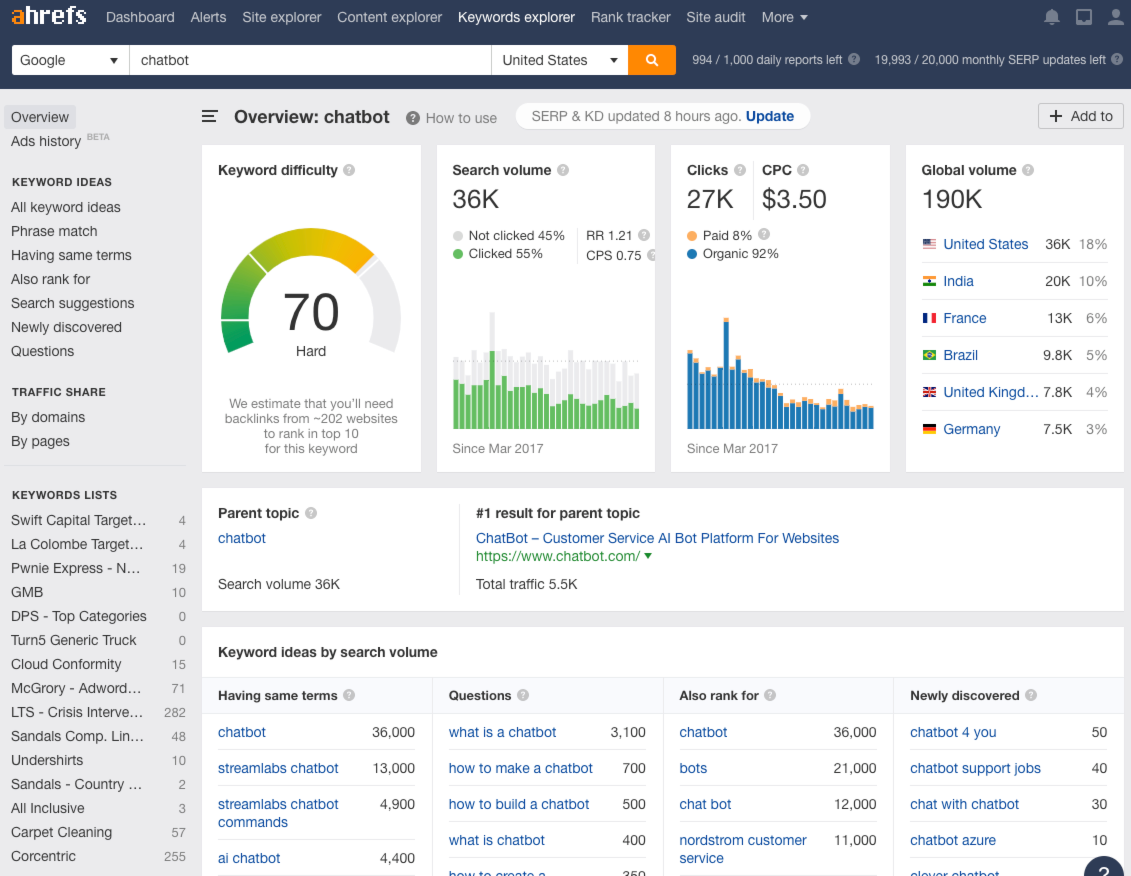

To make this process as tangible as possible, I’m going to actually run through it for real. I’ve decided to use a very competitive head term: chatbot.

Please Note

This process is not meant for comprehensive keyword research; for that, I’d encourage you to read about how to calculate a total addressable market. Instead, this is a simple (modern) process for getting moving that demonstrates the thought process and approach for identifying opportunities that can kick-start your keyword strategy.

If you’re specifically looking to optimize bottom-of-funnel pages, consider reading about keyword research for conversions (it focuses a lot more on identifying purchasing intent at later stages in the customer journey).

Getting Started

To dive in, you’ll need to start by selecting a “seed” keyword. Ideally, this is a really high-level one- or two-word term that represents the vertical market you’re looking to target at 50,000 feet.

Examples of seed terms across a few different verticals are:

- Shoes – Ecommerce

- Personal injury – Legal

- CRM – Software

- Student loans – Financial

- Vet tech – Education

- Vyvanse – Pharmaceuticals

- Plastic surgery – Medical

Again, for the purpose of demonstrating this particular process for keyword research, I’m going to use chatbot.

Step One

Drop that bad boy into Ahrefs Keywords Explorer:

In this case, the term is already the parent topic – if it’s not, re-run your search using the parent topic. This is important because at this stage we want to get the largest volume term to cover as much of the competitive landscape as possible.

Step Two

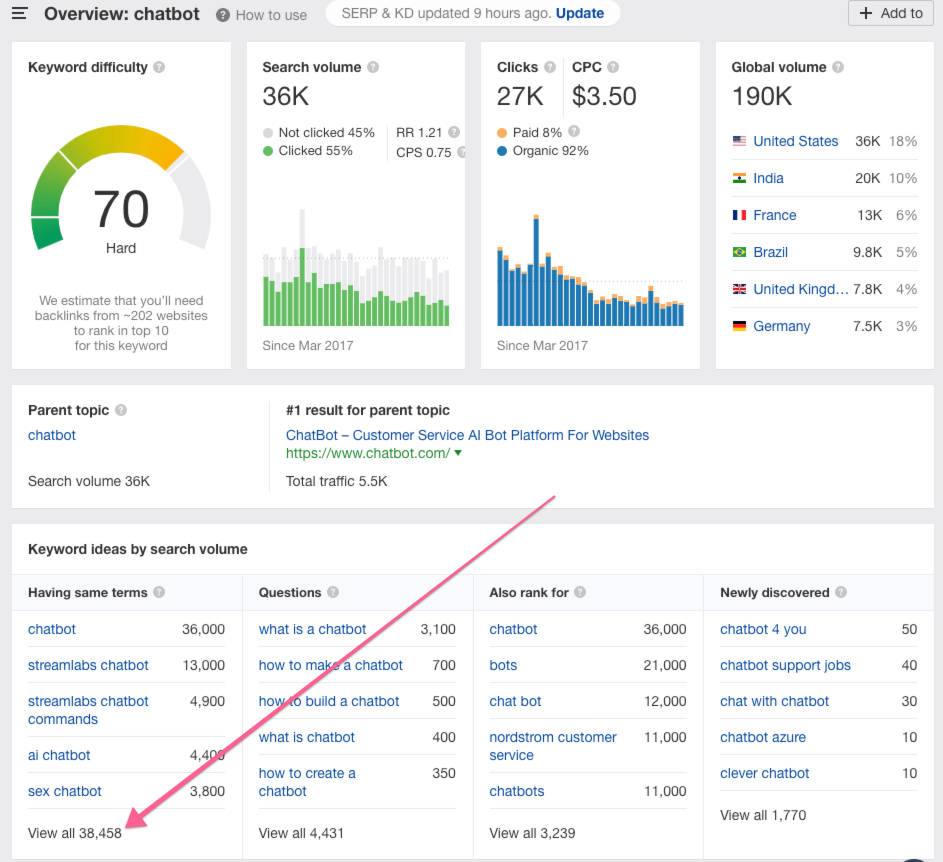

Click the “View all” link under the far-left column titled “Having same terms.”

Step Three

This is a very important but nuanced step.

Instead of the more traditional approach of sorting your term population by search volume (“Volume”), sort descending by Clicks, since nearly 50% of all searches do not result in a click:

Step Four

As you scroll down through your list, pay attention to the large disparities between Volume and Clicks; it’s kind of incredible.

Here’s an example of terms that actually result in more clicks than searches (meaning that the searchers are bouncing back to the search results and clicking more than one result):

What you’re doing now is reviewing the Click volume across all the terms with the most clicks, to understand how big of an export pool you’re going to need.

Ahrefs shows 50 results per page, and the “Quick export” feature allows you to export the first 1,000 rows, so you need to determine whether you need more than the first 1,000 rows of term data.

For the purposes of this post, I’m not interested in any keywords with fewer than 100 clicks/month, so by page 3 of results I’m out of the terms I want to grab data on (for now).

What this also means is that of the 38,458 keywords in the Ahrefs keyword index that include the word “chatbot,” fewer than 150 get more than 100 clicks/month.

Crazy right?
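If you’d rather check that click cutoff in a script than by paging through results, here’s a minimal Python sketch. The column names (`Keyword`, `Volume`, `Clicks`) are assumptions about the export header, and the sample rows are made-up placeholders for illustration, not real Ahrefs data.

```python
def keywords_above_click_threshold(rows, min_clicks=100):
    """Keep rows with at least `min_clicks` clicks/month, sorted by Clicks descending."""
    kept = [row for row in rows if row["Clicks"] >= min_clicks]
    return sorted(kept, key=lambda row: row["Clicks"], reverse=True)


def clicks_exceed_volume(rows):
    """Return keywords whose estimated clicks are higher than their search volume."""
    return [row["Keyword"] for row in rows if row["Clicks"] > row["Volume"]]


# Hypothetical sample rows (placeholder keywords, illustrative numbers only).
sample_rows = [
    {"Keyword": "example keyword b", "Volume": 300, "Clicks": 450},
    {"Keyword": "example keyword a", "Volume": 20000, "Clicks": 9000},
    {"Keyword": "example keyword c", "Volume": 250, "Clicks": 40},
]

top_terms = keywords_above_click_threshold(sample_rows)
print([row["Keyword"] for row in top_terms])
print(clicks_exceed_volume(sample_rows))
```

The length of `top_terms` is the number to plug into the custom export row count.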

Step Five

This is also really important, mostly for your credit balance (and bank account). When you go to export your results, make sure you select Custom under “Number of rows” and set the number of rows that meet your click threshold (for me, in this case, it’s 150), and then check the box for “Include SERPs.”

If you don’t select “Custom” and limit the rows, and instead let it default to the “First 1,000,” selecting “Include SERPs” eats up ~100,000 of your monthly export credits. In this case, instead of using just ~15,000 credits (150 rows x 100 results per SERP), I would have lit ~85,000 credits on fire.
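The credit math above is easy to sanity-check before you hit export. This is a small sketch that assumes, as the arithmetic in this post does, that an export with SERPs costs roughly rows x 100 results per SERP; the function name is hypothetical and this is not an official Ahrefs pricing formula.

```python
RESULTS_PER_SERP = 100  # per-keyword SERP size used in this post's arithmetic


def serp_export_credits(row_count, results_per_serp=RESULTS_PER_SERP):
    """Estimate credits consumed when exporting `row_count` keywords with SERPs."""
    return row_count * results_per_serp


custom_export = serp_export_credits(150)    # rows that met the click threshold
default_export = serp_export_credits(1000)  # the "First 1,000" default
wasted = default_export - custom_export

print(custom_export, default_export, wasted)  # prints 15000 100000 85000
```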

Now You Have Your Data

You’ve exported your CSV file, and now it’s time to go to work.

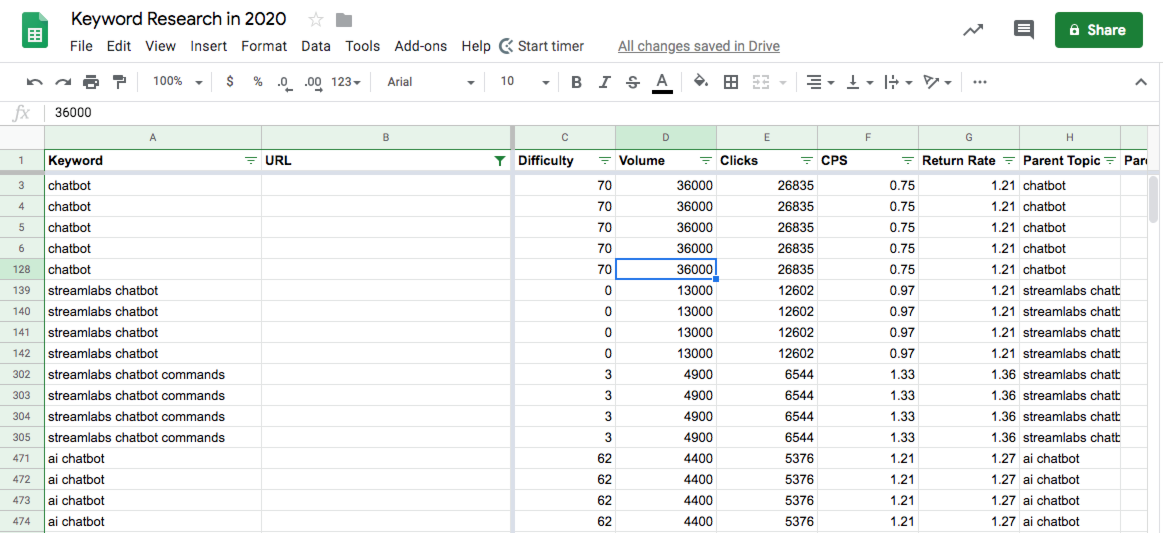

I personally prefer Google Sheets for data sets this tiny (again, this is only ~15,000 rows; 13,035 actually), but you may need to use Excel depending on how big your export file is.

Some of the beautiful URL-level data we now have is:

- Keyword – The query itself.

- Ranking URL – The current ranking URL for the query.

- Difficulty – How difficult it is to rank for the query, on a logarithmic scale out of 100.

- Volume – The estimated monthly search volume for the query on the local index.

- Clicks – The estimated number of clicks the search query generates per month on the localized index.

- CPS (Clicks/search) – The estimated clicks a user makes when searching the query.

- Return Rate – The estimated number of times the user returns to the search results for the query.

- Parent Topic – The parent topic (if applicable) the query would fall into in terms of contextual relevancy.

- Parent Topic Volume – The estimated monthly search volume of the parent topic term.

- Last Update – The date all of the above data was collected or calculated.

- RDs – The number of root domains linking to the ranking domain.

- DR – The Ahrefs “Domain Rating” score out of 100 for the domain’s authority.

- Organic Traffic – The estimated number of organic visits the ranking URL receives per month from the query.

- Ranking Keywords – The total number of keywords the ranking URL ranks for organically.

- CPC – The average cost per click from AdWords for the query.

- Position – The current position the ranking URL was in when the data was last updated.

- SERP Features – The types of features Google is currently displaying in the results page for the query.

Initial Sanitization

We also have some column cruft we want to dump (delete), so you can remove:

- Country – Useless unless you’re planning on blending data from various country-specific indices

- Backlinks – Doesn’t populate in this export

- URL Rating – Doesn’t populate in this export

- Top Keyword – Doesn’t populate in this export

- Top Keyword Volume – Doesn’t populate in this export

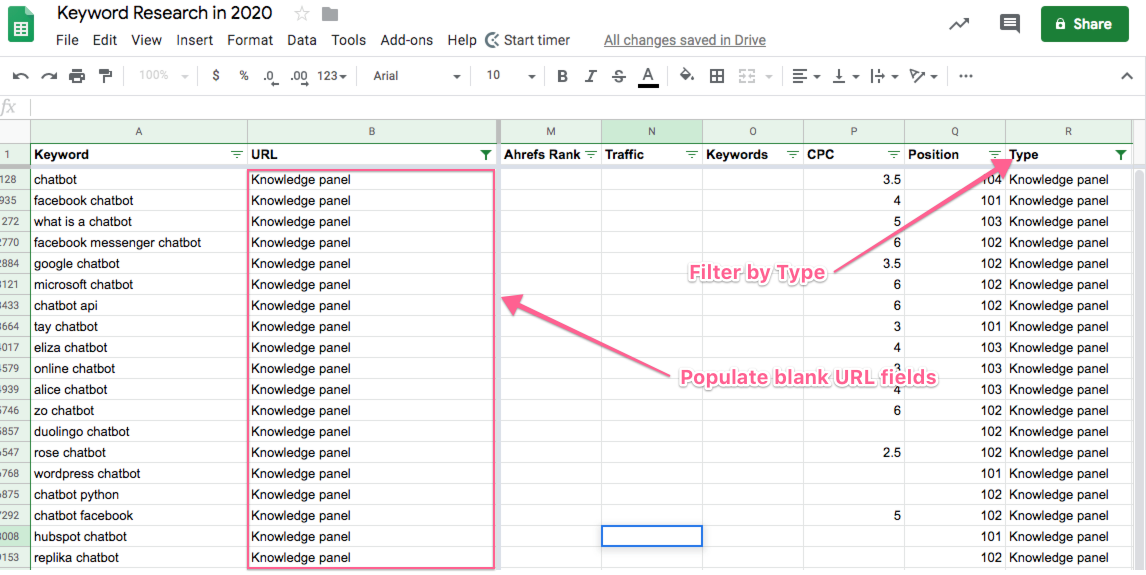

In addition, you’re likely to have a bunch of blank URL fields in column B, and we’ll want to tag these.

These are the result of Google UI features like People Also Ask and Knowledge Panels, so we want to label them as such. The easiest way to do this is to select Row 1, then click Data > Create a Filter, then click the filter in Column B > Filter by Value > Clear > select “Blanks,” and it should look like this:

Tip: drag the thick light gray cross-panes under Row 1 and between Columns B & C to set those to sticky for this next part.

Now scroll all the way over to the right so you can filter by the Google Features and tag the URLs:

You don’t have to do this; I just don’t like blank fields in my datasets 🙂

But once you’re done, make sure you go back to the URL column (Column B) and reset the Filter to “Select All” so you’re displaying all your keyword rows before you move on.
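If your export is too big to tag by hand, the same blank-URL cleanup can be sketched in a few lines of Python. This is only an illustration: the column names `URL` and `Type` are assumptions (use whichever column in your export holds the Google feature name), and the fallback label is something I made up for rows that have neither.

```python
def label_blank_urls(rows, url_col="URL", label_col="Type"):
    """Return copies of rows where a blank URL is replaced by the row's feature label."""
    labeled = []
    for row in rows:
        row = dict(row)  # copy so the input rows are left untouched
        url = (row.get(url_col) or "").strip()
        if not url:
            label = (row.get(label_col) or "").strip()
            # Hypothetical fallback for rows with no URL and no feature name.
            row[url_col] = label or "Unlabeled SERP feature"
        labeled.append(row)
    return labeled


# Hypothetical sample rows for illustration.
sample_rows = [
    {"Keyword": "example keyword", "URL": "https://example.com/page", "Type": "Organic"},
    {"Keyword": "example keyword", "URL": "", "Type": "People also ask"},
    {"Keyword": "example keyword", "URL": None, "Type": ""},
]

cleaned = label_blank_urls(sample_rows)
print([row["URL"] for row in cleaned])
```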

Formatting for More Efficient Consumption

Some of the data in here is going to be more important for us than others, so I like to move around the columns a bit to make it simpler for me to see the stuff I care about most.

My preferred order for columns is:

- Keyword

- URL

- Position

- Clicks

- Type

- Difficulty

- RDs

- DR

- Traffic

- Keywords

- CPC

- Volume

- CPS

- Return Rate

- Parent Topic

- Topic Volume

- Last Update

Filtering for Modifiers

Keywords are composed of classes and modifiers.

A query class most often contains a root term and a modifier, as AJ Kohn so eloquently explains in this post. Modifiers tend to show up as a prefix (before the root term) or a suffix (after the root term), which, fascinatingly enough, can actually alter the intent, but that’s a discussion for another day (and another post).

What we want to do is to filter our list of keywords based on modifiers they contain, so we can tag the terms for the modifiers and begin to look for patterns to inform our overall keyword strategy.

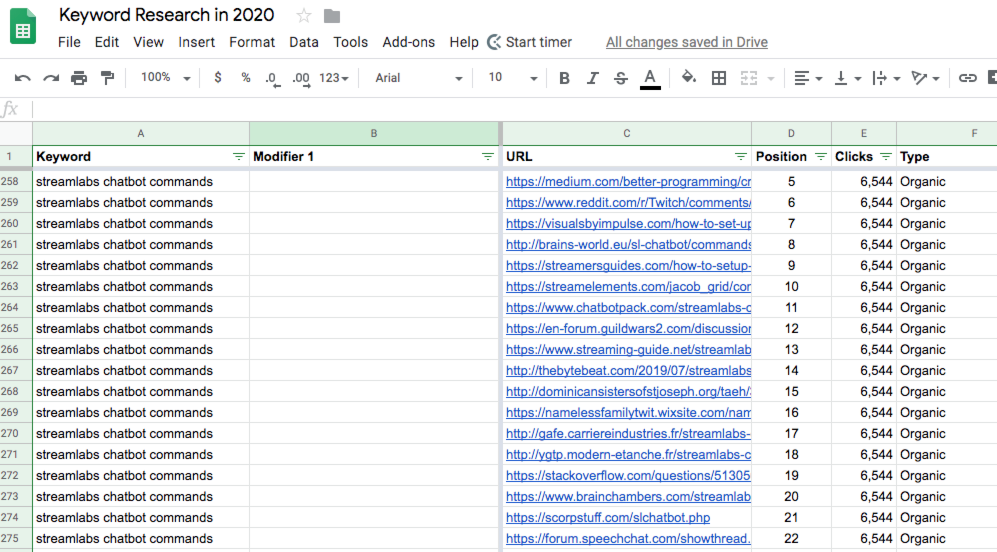

The way we’re going to do this is one column at a time, starting with adding a new column between A & B called Brand Modifier.

We’ll start by tagging for “brand modifiers,” i.e. any instance where a brand name is used within the keyword. These represent searchers closer to the bottom of the conversion funnel and hence need to be treated with different considerations, which I’ll get into a bit later in this post.

In the screenshot below you can see the brand modifier “streamlabs” in use:

There are significantly more efficient ways to do this, like running macros and other fun automation scripts to speed this whole piece up, but to keep this post SUPER simple, you’re just going to use the Filter on the Keyword column for “Text contains,” enter one modifier at a time, and filter through your list.

So I’m going to run through this list and tag terms for brand modifiers when present. A sample of the brand terms I found are:

- Streamlabs

- Microsoft

- Mitsuku

and a handful of others. It’s important to tag branded terms because, generally speaking, they are not going to be worthwhile targets for an SEO strategy.

It’s really the non-branded terms we want to uncover, and that’s where there’s a bit of nuance to be aware of…

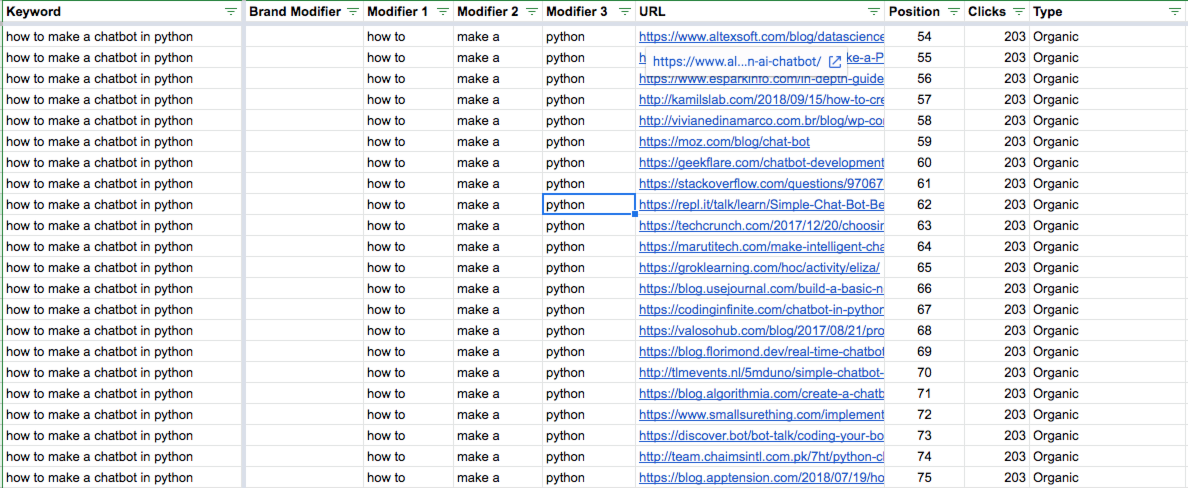

For example, the keyword “how to make a chatbot” would actually break down into:

- chatbot – root term

- how to – modifier 1

- make – modifier 2

The reason I’m separating “how to” out of “how to make a” is because it’s highly likely we’ll run into other keywords that contain both of those modifiers, so they should be organized separately.

The next step is to add additional columns for the modifiers so they can all be broken out, and to continue to filter for them and tag them.
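For anyone who does want to script the tagging instead of filtering one modifier at a time, here’s a rough Python sketch. The function names and the two modifier lists are hypothetical and just borrow a few terms from this post. One deliberate difference from the “Text contains” filter: this matches whole words or phrases, so “make” does not also tag “maker.”

```python
import re


def find_modifiers(keyword, modifiers):
    """Return the modifiers that appear in `keyword` as whole words or phrases."""
    found = []
    for modifier in modifiers:
        # \b anchors keep "make" from matching inside "maker".
        pattern = r"\b" + re.escape(modifier) + r"\b"
        if re.search(pattern, keyword, flags=re.IGNORECASE):
            found.append(modifier)
    return found


# Hypothetical, partial lists for illustration.
BRAND_MODIFIERS = ["streamlabs", "microsoft", "mitsuku"]
TOPIC_MODIFIERS = ["how to", "make", "maker", "python"]


def tag_keyword(keyword):
    """Build one tagged row: brand modifiers in one column, other modifiers in another."""
    return {
        "Keyword": keyword,
        "Brand Modifier": find_modifiers(keyword, BRAND_MODIFIERS),
        "Modifiers": find_modifiers(keyword, TOPIC_MODIFIERS),
    }


print(tag_keyword("how to make a chatbot"))
print(tag_keyword("streamlabs chatbot commands"))
```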

Now we want to filter down the list for non-brand terms, to see what our top of funnel opportunities might look like. I have also learned that there are far more adult applications for chatbots than I ever knew (or cared to know) existed…

But reviewing the top of funnel, non-branded, non-adult modifiers, I’m left with:

- what is

- website

- visual

- tutorial

- tensorflow (machine learning framework)

- software

- smartest

- real estate

- python (programming language)

- open source

- online

- most + advanced (2 separate modifiers)

- maker

- machine learning

- javascript

- icon

- how to + make/build/create

- free

- examples

- definition

- create

- deep learning

- commands

- builder

- best

- app

- api

This modifier set shows me that there’s still a lot of confusion in the industry about what chatbots are, what they look like, and how to use them. I honestly expected to see more use case modifiers, like real estate for example, and am especially shocked I didn’t come across “ecommerce” and “lawyers” as modifiers within this term population.

Prioritizing Your Keywords

Keeping the non-brand modifier filter on, next we want to narrow down the field to opportunities for page 1 rankings worth exploring.

If you were wondering why you needed to export all that SERP data, here is where we put it to use 🙂

To do this, add the following column filters:

- Column: Position – Filter by Condition > Less than or equal to: 10

- Column: Difficulty – Filter by Condition > Less than: 20

- Column: Traffic – Sort Z -> A

Now we add in a new column, immediately to the right of the Keyword column (Column A), called “Priority.”

Scroll through your list (leaving the above 3 filters and sort in place) and start tagging Priority terms by dropping a “y” into the Priority column. You’re looking for any modifier that might be applicable (in my case right now I’m literally just ignoring a mess of adult modifiers :/ ).

By pulling out all the term modifiers in your sheet, and adding some simple filters around impact (traffic) and difficulty, you’re able to really quickly find opportunities worth exploring.
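The three filters above translate almost directly into code. Here’s a minimal Python sketch, assuming columns named `Position`, `Difficulty`, and `Traffic` with numeric values; the sample rows are invented placeholders, not data from my export.

```python
def page_one_opportunities(rows, max_position=10, max_difficulty=20):
    """Apply the post's filters: Position <= 10, Difficulty < 20, Traffic sorted Z -> A."""
    kept = [
        row for row in rows
        if row["Position"] <= max_position and row["Difficulty"] < max_difficulty
    ]
    return sorted(kept, key=lambda row: row["Traffic"], reverse=True)


# Hypothetical sample rows (illustrative numbers only).
sample_rows = [
    {"Keyword": "example keyword a", "Position": 3, "Difficulty": 12, "Traffic": 150},
    {"Keyword": "example keyword b", "Position": 10, "Difficulty": 19, "Traffic": 900},
    {"Keyword": "example keyword c", "Position": 2, "Difficulty": 20, "Traffic": 5000},
    {"Keyword": "example keyword d", "Position": 11, "Difficulty": 5, "Traffic": 700},
]

shortlist = page_one_opportunities(sample_rows)
print([row["Keyword"] for row in shortlist])
```

Deciding which of the shortlisted rows get a “y” is still a judgment call you make by eye.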

Here’s what mine is looking like:

Infer and Tag for Intent

This is where it gets interesting, because in previous keyword research processes I’ve designed, I would tag for intent prior to prioritizing. What I’ve learned over the years is that while that process is still sound, you may end up with opportunities that are so difficult to rank for due to steep competition, that you don’t have a good idea of where to start.

So this flips that order on its head and has you think about it a bit differently.

For the purposes of tagging these terms for intent (and keeping it SIMPLE), I’m going to be using the more old school buckets of:

- Information

- Investigation

- Transaction

If you want to read the new school take on how to better assess intent in 2020, check out this post from Kane Jamison.

My criteria for tagging each is:

Informational

These are terms that lack almost any context, where it’s clear the searcher is at the very beginning of their information-gathering journey and is looking for directional results to help them better inform and refine their query.

Based on my list of non-branded chatbot modifiers, this includes:

- visual

- machine learning

- deep learning

- examples

- app

- website

Investigational

These are term modifiers that show a more specific use case, or at the very least lend a bit more context to specifically what the searcher is looking for.

Based on my list of chatbot modifiers, this includes:

- tensorflow

- real estate

- python

- commands

- alice

- javascript

- software

- open source

- most

Transactional

These are modifiers that indicate the searcher knows exactly what they’re looking for and is ready to make a decision, so for my list these terms are:

- tutorial

- how to + make a + python

- setting up + {brand}

- {brand} + scripts

- how to + use + {brand}

- icon

If you’re curious why I chose to tag any of the specific modifiers as the intent I did, drop me a comment and I’ll respond with more details 🙂
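Once modifiers are tagged, the intent buckets above can be applied as a simple lookup. This Python sketch only covers the single-word and single-phrase modifiers from my lists (the compound and {brand} patterns are left out), and the tie-break rule is my own assumption: when a keyword carries modifiers from more than one bucket, it takes the bucket furthest along the funnel.

```python
# Buckets listed from earliest to latest stage; modifiers taken from the lists above.
MODIFIERS_BY_INTENT = {
    "Informational": ["visual", "machine learning", "deep learning",
                      "examples", "app", "website"],
    "Investigational": ["tensorflow", "real estate", "python", "commands", "alice",
                        "javascript", "software", "open source", "most"],
    "Transactional": ["tutorial", "icon"],
}

INTENT_ORDER = list(MODIFIERS_BY_INTENT)  # dicts preserve insertion order
INTENT_BY_MODIFIER = {
    modifier: intent
    for intent, modifiers in MODIFIERS_BY_INTENT.items()
    for modifier in modifiers
}


def infer_intent(modifiers):
    """Return the latest-stage intent among the tagged modifiers, or None if untagged."""
    intents = [INTENT_BY_MODIFIER[m] for m in modifiers if m in INTENT_BY_MODIFIER]
    if not intents:
        return None
    # Assumption: the bucket furthest along the funnel wins.
    return max(intents, key=INTENT_ORDER.index)


print(infer_intent(["python", "tutorial"]))
print(infer_intent(["examples"]))
print(infer_intent(["free"]))
```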

Design Your Short-term Content Map

Designing your SEO content map is just another way of saying find themes that have organic search volume / clicks that you can target with well executed content.

Aside from updating existing pages to improve keyword targeting, expanding your keyword footprint by creating new content is ultimately how you win at direct rankings and even execute on the SEO monopoly strategy.

This all comes back to the word themes. In other words, where can you find terms with enough semantic overlap that you can build the requirements for a single piece of content to target as many relevant, valuable terms as possible?

Identifying Thematic Patterns Among Your Priority Keywords

SEO targeting is no longer just about keywords, because like all modern practices, it has evolved.

Modern SEO requires a broader focus on concepts that are represented by topics. SEO has become far more about addressing all of the content needed to create relevance, than it is about stuffing loads of terms into a page to try to rank for them and their counterparts.

This means you need to think more in terms of themes that you can fit your target keywords into, and use these term groupings to design your content.

Looking across the term modifiers I uncovered throughout the example in this post, and grouping them into “themes” leaves me with:

- Chatbot + App + Examples

- Visual

- Machine Learning + Deep Learning

- Tensorflow

- Python

- ALICE

- Javascript

- Open Source

- Chatbot + Commands

- Streamlabs

- Microsoft

- Mitsuku

- Tutorial + How to make a chatbot

- Setting it up

- Software

- Scripts

- API

Then there’s some outlier content you could create if you needed to around free, best, smart, and icons.

But the thematic groups above identify opportunities for 3 large pieces of content that have a solid chance to rank (if properly executed) for the biggest opportunities in this vertical based on our process for breaking down search trends using term modifiers and competition.

Bonus

If you want to grab the source data file I used to write this post, here you go